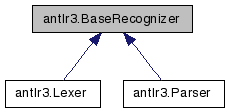

antlr3.BaseRecognizer Class Reference

Common recognizer functionality.

Public Member Functions

| Kind | Name | Description |
| --- | --- | --- |
| def | __init__ | |
| def | setInput | |
| def | reset | Reset the parser's state; subclasses must rewind the input stream. |
| def | match | Match current input symbol against ttype. |
| def | matchAny | Match the wildcard: in a symbol. |
| def | mismatchIsUnwantedToken | |
| def | mismatchIsMissingToken | |
| def | reportError | Report a recognition problem. |
| def | displayRecognitionError | |
| def | getErrorMessage | What error message should be generated for the various exception types? |
| def | getNumberOfSyntaxErrors | Get number of recognition errors (lexer, parser, tree parser). |
| def | getErrorHeader | What is the error header, normally line/character position information? |
| def | getTokenErrorDisplay | How should a token be displayed in an error message? The default is to display just the text, but during development you might want to have a lot of information spit out. |
| def | emitErrorMessage | Override this method to change where error messages go. |
| def | recover | Recover from an error found on the input stream. |
| def | beginResync | A hook to listen in on the token consumption during error recovery. |
| def | endResync | A hook to listen in on the token consumption during error recovery. |
| def | computeErrorRecoverySet | Compute the error recovery set for the current rule. |
| def | computeContextSensitiveRuleFOLLOW | Compute the context-sensitive FOLLOW set for current rule. |
| def | combineFollows | |
| def | recoverFromMismatchedToken | Attempt to recover from a single missing or extra token. |
| def | recoverFromMismatchedSet | Not currently used. |
| def | getCurrentInputSymbol | Match needs to return the current input symbol, which gets put into the label for the associated token ref; e.g., x=ID. |
| def | getMissingSymbol | Conjure up a missing token during error recovery. |

Public Attributes

| Name |
| --- |
| input |

Static Public Attributes

| Type | Name and value |
| --- | --- |
| int | MEMO_RULE_FAILED = 2 |
| int | MEMO_RULE_UNKNOWN = 1 |
| | DEFAULT_TOKEN_CHANNEL = DEFAULT_CHANNEL |
| | HIDDEN = HIDDEN_CHANNEL |
| | tokenNames = None |
| tuple | antlr_version = (3, 0, 1, 0) |
| string | antlr_version_str = "3.0.1" |

Private Attributes

| Name | Description |
| --- | --- |
| _state | The state of a lexer, parser, or tree parser is collected into a state object so that it can be shared. |

Detailed Description

Common recognizer functionality. A generic recognizer that can handle recognizers generated from lexer, parser, and tree grammars. This is all the parsing support code essentially; most of it is error recovery stuff and backtracking.

Definition at line 2539 of file antlr3.py.

Member Function Documentation

def antlr3.BaseRecognizer.__init__(self, state = None)

def antlr3.BaseRecognizer.reset(self)

Reset the parser's state; subclasses must rewind the input stream.

Reimplemented in antlr3.Lexer, and antlr3.Parser.

def antlr3.BaseRecognizer.match(self, input, ttype, follow)

Match current input symbol against ttype.

Attempt single token insertion or deletion error recovery. If that fails, throw MismatchedTokenException.

To turn off single token insertion or deletion error recovery, override recoverFromMismatchedToken() and have it throw an exception. See TreeParser.recoverFromMismatchedToken(). This way any error in a rule will cause an exception and immediate exit from rule. Rule would recover by resynchronizing to the set of symbols that can follow rule ref.
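The override described above can be sketched with stand-in classes. This is a minimal illustration and does not import antlr3: `SketchRecognizer`, `StrictRecognizer`, and `Mismatch` are hypothetical names, the token stream is a plain list, and the method signatures are simplified compared with the ones documented here.

```python
class Mismatch(Exception):
    """Stand-in for a mismatched-token exception (hypothetical name)."""


class SketchRecognizer:
    """Toy recognizer over a list of token types; not the antlr3 class."""

    def __init__(self, tokens):
        self.tokens = list(tokens)
        self.pos = 0

    def match(self, ttype):
        # Happy path: the current symbol has the expected type.
        if self.pos < len(self.tokens) and self.tokens[self.pos] == ttype:
            self.pos += 1
            return ttype
        # Otherwise delegate to the recovery hook.
        return self.recoverFromMismatchedToken(ttype)

    def recoverFromMismatchedToken(self, ttype):
        # Toy default: behave as if the expected token had been present.
        return ttype


class StrictRecognizer(SketchRecognizer):
    def recoverFromMismatchedToken(self, ttype):
        # Single-token recovery is switched off: every mismatch raises,
        # so the enclosing rule exits immediately.
        raise Mismatch("expected %r at position %d" % (ttype, self.pos))
```

With this shape, only the subclass decides whether a mismatch is repaired in place or propagated to the rule's exception handler.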

def antlr3.BaseRecognizer.matchAny(self, input)

def antlr3.BaseRecognizer.mismatchIsUnwantedToken(self, input, ttype)

def antlr3.BaseRecognizer.mismatchIsMissingToken(self, input, follow)

def antlr3.BaseRecognizer.reportError(self, e)

Report a recognition problem.

This method sets errorRecovery to indicate the parser is recovering not parsing. Once in recovery mode, no errors are generated. To get out of recovery mode, the parser must successfully match a token (after a resync). So it will go:

1. error occurs
2. enter recovery mode, report error
3. consume until token found in resynch set
4. try to resume parsing
5. next match() will reset errorRecovery mode

If you override, make sure to update syntaxErrors if you care about that.
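The recovery-mode cycle can be modelled in a few lines. The class below is a simplified sketch, not the antlr3 implementation: `RecoveryModeSketch`, `matchSucceeded`, and the `messages` list are hypothetical, and only the `errorRecovery` flag and the `syntaxErrors` counter correspond to names mentioned in the text.

```python
class RecoveryModeSketch:
    """Toy model of the errorRecovery flag; not the antlr3 implementation."""

    def __init__(self):
        self.errorRecovery = False   # True while resynchronizing
        self.syntaxErrors = 0        # errors that were actually reported
        self.messages = []           # hypothetical sink for reported errors

    def reportError(self, message):
        # Errors raised while already recovering are treated as spurious.
        if self.errorRecovery:
            return
        self.syntaxErrors += 1
        self.errorRecovery = True
        self.messages.append(message)

    def matchSucceeded(self):
        # A successful token match ends recovery mode.
        self.errorRecovery = False


r = RecoveryModeSketch()
r.reportError("mismatched token")    # reported, enters recovery mode
r.reportError("follow-on error")     # suppressed while recovering
r.matchSucceeded()                   # leaves recovery mode
r.reportError("second real error")   # reported
print(r.syntaxErrors)                # prints 2
```

An override that skips the counter update would leave the reported error count stale, which is why the note above asks overriders to maintain syntaxErrors themselves.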

Reimplemented in antlr3.Lexer.

def antlr3.BaseRecognizer.displayRecognitionError(self, tokenNames, e)

def antlr3.BaseRecognizer.getErrorMessage(self, e, tokenNames)

What error message should be generated for the various exception types?

Not very object-oriented code, but I like having all error message generation within one method rather than spread among all of the exception classes. This also makes it much easier for the exception handling because the exception classes do not have to have pointers back to this object to access utility routines and so on. Also, changing the message for an exception type would be difficult because you would have to subclass the exception, but then somehow get ANTLR to make those kinds of exception objects instead of the default. This looks weird, but trust me--it makes the most sense in terms of flexibility.

For grammar debugging, you will want to override this to add more information such as the stack frame with getRuleInvocationStack(e, this.getClass().getName()) and, for no viable alts, the decision description and state etc...

Override this to change the message generated for one or more exception types.
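A rough sketch of the "one method builds every message" design follows. It uses stand-in exception classes rather than the real antlr3 ones: `MismatchedToken`, `NoViableAlt`, `MessageSketch`, `VerboseMessages`, and the attribute names `token_text` and `expecting` are all assumptions made for illustration, as is the message wording.

```python
class MismatchedToken(Exception):
    """Stand-in exception: saw token_text, wanted token type `expecting`."""

    def __init__(self, token_text, expecting):
        super().__init__(token_text)
        self.token_text = token_text
        self.expecting = expecting


class NoViableAlt(Exception):
    """Stand-in exception: no alternative matches at token_text."""

    def __init__(self, token_text):
        super().__init__(token_text)
        self.token_text = token_text


class MessageSketch:
    def getErrorMessage(self, e, tokenNames):
        # All message formatting lives here, keyed on the exception type.
        if isinstance(e, MismatchedToken):
            return "mismatched input %r expecting %s" % (
                e.token_text, tokenNames[e.expecting])
        if isinstance(e, NoViableAlt):
            return "no viable alternative at input %r" % e.token_text
        return str(e)


class VerboseMessages(MessageSketch):
    def getErrorMessage(self, e, tokenNames):
        # Override once to enrich every message; exceptions stay untouched.
        base = super().getErrorMessage(e, tokenNames)
        return "%s [%s]" % (base, type(e).__name__)
```

Because the exception classes carry only data, changing the wording needs a single override in the recognizer and no new exception types.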

Reimplemented in antlr3.Lexer.

def antlr3.BaseRecognizer.getNumberOfSyntaxErrors(self)

Get number of recognition errors (lexer, parser, tree parser).

Each recognizer tracks its own count, so a parser and a lexer each have a separate count. Spurious errors found between an error and the next valid token match are not counted.

See also reportError()

def antlr3.BaseRecognizer.getErrorHeader(self, e)

def antlr3.BaseRecognizer.getTokenErrorDisplay(self, t)

How should a token be displayed in an error message? The default is to display just the text, but during development you might want to have a lot of information spit out.

Override in that case to use t.toString() (which, for CommonToken, dumps everything about the token). This is better than forcing you to override a method in your token objects because you don't have to go modify your lexer so that it creates a new Java type.

def antlr3.BaseRecognizer.emitErrorMessage(self, msg)

def antlr3.BaseRecognizer.recover(self, input, re)

Recover from an error found on the input stream.

This is for NoViableAlt and mismatched symbol exceptions. If you enable single token insertion and deletion, this will usually not handle mismatched symbol exceptions but there could be a mismatched token that the match() routine could not recover from.

def antlr3.BaseRecognizer.beginResync(self)

def antlr3.BaseRecognizer.endResync(self)

def antlr3.BaseRecognizer.computeErrorRecoverySet(self)

Compute the error recovery set for the current rule.

During rule invocation, the parser pushes the set of tokens that can follow that rule reference on the stack; this amounts to computing FIRST of what follows the rule reference in the enclosing rule. This local follow set only includes tokens from within the rule; i.e., the FIRST computation done by ANTLR stops at the end of a rule.

EXAMPLE

When you find a "no viable alt exception", the input is not consistent with any of the alternatives for rule r. The best thing to do is to consume tokens until you see something that can legally follow a call to r *or* any rule that called r. You don't want the exact set of viable next tokens because the input might just be missing a token--you might consume the rest of the input looking for one of the missing tokens.

Consider grammar:

    a : '[' b ']'
      | '(' b ')'
      ;
    b : c '^' INT ;
    c : ID
      | INT
      ;

At each rule invocation, the set of tokens that could follow that rule is pushed on a stack. Here are the various "local" follow sets:

    FOLLOW(b1_in_a) = FIRST(']') = ']'
    FOLLOW(b2_in_a) = FIRST(')') = ')'
    FOLLOW(c_in_b) = FIRST('^') = '^'

Upon erroneous input "[]", the call chain is

a -> b -> c

and, hence, the follow context stack is:

| depth | local follow set | after call to rule |
| --- | --- | --- |
| 0 | <EOF> | a (from main()) |
| 1 | ']' | b |
| 2 | '^' | c |

Notice that ')' is not included, because b would have to have been called from a different context in rule a for ')' to be included.

For error recovery, we cannot consider FOLLOW(c) (context-sensitive or otherwise). We need the combined set of all context-sensitive FOLLOW sets--the set of all tokens that could follow any reference in the call chain. We need to resync to one of those tokens. Note that FOLLOW(c)='^' and if we resync'd to that token, we'd consume until EOF. We need to sync to context-sensitive FOLLOWs for a, b, and c: {']','^'}. In this case, for input "[]", LA(1) is in this set so we would not consume anything and after printing an error rule c would return normally. It would not find the required '^' though. At this point, it gets a mismatched token error and throws an exception (since LA(1) is not in the viable following token set). The rule exception handler tries to recover, but finds the same recovery set and doesn't consume anything. Rule b exits normally returning to rule a. Now it finds the ']' (and with the successful match exits errorRecovery mode).

So, you can see that the parser walks up the call chain looking for the token that was a member of the recovery set.

Errors are not generated in errorRecovery mode.

ANTLR's error recovery mechanism is based upon original ideas:

"Algorithms + Data Structures = Programs" by Niklaus Wirth

and

"A note on error recovery in recursive descent parsers": http://portal.acm.org/citation.cfm?id=947902.947905

Later, Josef Grosch had some good ideas:

"Efficient and Comfortable Error Recovery in Recursive Descent Parsers": ftp://www.cocolab.com/products/cocktail/doca4.ps/ell.ps.zip

Like Grosch I implemented local FOLLOW sets that are combined at run-time upon error to avoid overhead during parsing.
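The "[]" example can be reproduced with plain Python sets. This is a sketch under simplifying assumptions, not the runtime's code: the follow stack is a list of sets of token texts, and `compute_error_recovery_set` and `resync` are hypothetical helper names.

```python
EOF = "<EOF>"


def compute_error_recovery_set(follow_stack):
    """Union every local follow set on the stack (outermost frame first)."""
    recovery = set()
    for local_follow in follow_stack:
        recovery |= local_follow
    return recovery


def resync(tokens, pos, recovery):
    """Skip tokens until one in the recovery set is found; return its index."""
    while pos < len(tokens) and tokens[pos] not in recovery:
        pos += 1
    return pos


# Follow stack for the call chain a -> b -> c on input "[]".
follow_stack = [{EOF}, {"]"}, {"^"}]
recovery = compute_error_recovery_set(follow_stack)

tokens = ["[", "]", EOF]
print(sorted(recovery))                # ['<EOF>', ']', '^']
print(resync(tokens, 1, recovery))     # 1: LA(1) is ']', nothing is consumed
print(resync(tokens, 1, {"^"}))        # 3: syncing only to '^' eats everything
```

The last call illustrates why the combined set matters: resynchronizing only to the innermost rule's follow set would consume the whole input.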

def antlr3.BaseRecognizer.computeContextSensitiveRuleFOLLOW(self)

Compute the context-sensitive FOLLOW set for current rule.

This is the set of token types that can follow a specific rule reference given a specific call chain. You get the set of viable tokens that can possibly come next (lookahead depth 1) given the current call chain. Contrast this with the definition of plain FOLLOW for rule r:

FOLLOW(r)={x | S=>*alpha r beta in G and x in FIRST(beta)}

where x in T* and alpha, beta in V*; T is set of terminals and V is the set of terminals and nonterminals. In other words, FOLLOW(r) is the set of all tokens that can possibly follow references to r in *any* sentential form (context). At runtime, however, we know precisely which context applies as we have the call chain. We may compute the exact (rather than covering superset) set of following tokens.

For example, consider grammar:

    stat : ID '=' expr ';'       // FOLLOW(stat)=={EOF}
         | "return" expr '.'
         ;
    expr : atom ('+' atom)* ;    // FOLLOW(expr)=={';','.',')'}
    atom : INT                   // FOLLOW(atom)=={'+',')',';','.'}
         | '(' expr ')'
         ;

The FOLLOW sets are all-inclusive, whereas context-sensitive FOLLOW sets are precisely what could follow a rule reference. For input "i=(3);", here is the derivation:

    stat => ID '=' expr ';'
         => ID '=' atom ('+' atom)* ';'
         => ID '=' '(' expr ')' ('+' atom)* ';'
         => ID '=' '(' atom ')' ('+' atom)* ';'
         => ID '=' '(' INT ')' ('+' atom)* ';'
         => ID '=' '(' INT ')' ';'

At the "3" token, you'd have a call chain of

stat -> expr -> atom -> expr -> atom

What can follow that specific nested ref to atom? Exactly ')' as you can see by looking at the derivation of this specific input. Contrast this with the FOLLOW(atom)={'+',')',';','.'}.

You want the exact viable token set when recovering from a token mismatch. Upon token mismatch, if LA(1) is a member of the viable next token set, then you know there is most likely a missing token in the input stream. "Insert" one by just not throwing an exception.

def antlr3.BaseRecognizer.recoverFromMismatchedToken(self, input, ttype, follow)

Attempt to recover from a single missing or extra token.

EXTRA TOKEN

LA(1) is not what we are looking for. If LA(2) has the right token, however, then assume LA(1) is some extra spurious token. Delete it and match LA(2) as if we were doing a normal match(), which advances the input.

MISSING TOKEN

If the current token is consistent with what could come after ttype, then it is OK to "insert" the missing token; otherwise, throw an exception. For example, input 'i=(3;' is clearly missing the ')'. When the parser returns from the nested call to expr, it will have the call chain:

stat -> expr -> atom

and it will be trying to match the ')' at this point in the derivation:

    => ID '=' '(' INT ')' ('+' atom)* ';'
                       ^

match() will see that ';' doesn't match ')' and report a mismatched token error. To recover, it sees that LA(1)==';' is in the set of tokens that can follow the ')' token reference in rule atom. It can assume that you forgot the ')'.
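Both repairs can be sketched over a list of token texts. This is an illustrative model only: `recover_from_mismatched_token` and `combine_follows_exact` are hypothetical helpers with simplified signatures, and the `EOR` sentinel (meaning "the end of the rule can be reached from here") is a modelling assumption used to decide when an outer frame's follow set also applies.

```python
EOR = "<EOR>"   # sentinel: the end of the current rule is reachable


def combine_follows_exact(follow_stack):
    """Union local follow sets from the innermost frame outward, moving to
    the next outer frame only while the end of the rule is reachable."""
    combined = set()
    for local in reversed(follow_stack):
        combined |= local
        if EOR not in local:
            break
    combined.discard(EOR)
    return combined


def recover_from_mismatched_token(tokens, pos, ttype, follow_stack):
    """Return (action, new_pos); raise ValueError if no repair applies."""
    # Extra token: LA(2) is what we wanted, so skip LA(1) and match LA(2).
    if pos + 1 < len(tokens) and tokens[pos + 1] == ttype:
        return "deleted " + tokens[pos], pos + 2
    # Missing token: LA(1) can legally follow ttype in this call chain.
    if pos < len(tokens) and tokens[pos] in combine_follows_exact(follow_stack):
        return "inserted " + ttype, pos
    raise ValueError("mismatched token: expected " + ttype)


# Input 'i=(3;' while matching ')' in atom, call chain stat -> expr -> atom.
# Frames, outermost first: stat, expr in stat, atom in expr, ')' in atom.
stack = [{"<EOF>"}, {";"}, {"+", EOR}, {EOR}]
missing = recover_from_mismatched_token(
    ["ID", "=", "(", "INT", ";"], 4, ")", stack)
print(missing)   # ('inserted )', 4)

# Input 'i=(3 3);' has a spurious second INT before the ')'.
extra = recover_from_mismatched_token(
    ["ID", "=", "(", "INT", "INT", ")", ";"], 4, ")", stack)
print(extra)     # ('deleted INT', 6)
```

In the missing-token case the position does not advance, which mirrors the idea of "inserting" a token by simply not throwing.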

def antlr3.BaseRecognizer.recoverFromMismatchedSet(self, input, e, follow)

def antlr3.BaseRecognizer.getCurrentInputSymbol(self, input)

Match needs to return the current input symbol, which gets put into the label for the associated token ref; e.g., x=ID.

Token and tree parsers need to return different objects. Rather than test for input stream type or change the IntStream interface, I use a simple method to ask the recognizer to tell me what the current input symbol is.

This is ignored for lexers.

Reimplemented in antlr3.Parser.

def antlr3.BaseRecognizer.getMissingSymbol(self, input, e, expectedTokenType, follow)

Conjure up a missing token during error recovery.

The recognizer attempts to recover from single missing symbols, but actions might refer to the missing symbol. For example, x=ID {f($x);}. The action clearly assumes that an identifier has been matched previously and that $x points at that token. If that token is missing, but the next token in the stream is what we want, we assume that this token is missing and keep going. Because we have to return some token to replace the missing token, we have to conjure one up. This method gives the user control over the tokens returned for missing tokens. Mostly, you will want to create something special for identifier tokens. For literals such as '{' and ',', the default action in the parser or tree parser works: it simply creates a CommonToken of the appropriate type whose text is the token. If you change what tokens must be created by the lexer, override this method to create the appropriate tokens.
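The idea of conjuring a placeholder token, and of special-casing identifiers, might look roughly like this. The sketch does not use the antlr3 token classes: `SketchToken`, `MissingSymbolSketch`, `IdentifierAware`, the token type numbering, and the placeholder texts are all hypothetical, and the method takes only the expected token type rather than the full documented parameter list.

```python
from collections import namedtuple

# Stand-in for a token object: just a type number and its text.
SketchToken = namedtuple("SketchToken", ["type", "text"])


class MissingSymbolSketch:
    # Hypothetical token vocabulary; the index is the token type.
    tokenNames = ["<invalid>", "ID", "'{'"]

    def getMissingSymbol(self, expectedTokenType):
        # Default: a token of the expected type with a descriptive text.
        name = self.tokenNames[expectedTokenType]
        return SketchToken(expectedTokenType, "<missing %s>" % name)


class IdentifierAware(MissingSymbolSketch):
    ID = 1

    def getMissingSymbol(self, expectedTokenType):
        # Actions such as f($x) may need a usable identifier text.
        if expectedTokenType == self.ID:
            return SketchToken(self.ID, "__missing_id__")
        return super().getMissingSymbol(expectedTokenType)
```

An action that reads the token's text then always receives an object of the expected shape, even when the input never contained the token.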

Reimplemented in antlr3.Parser.

Member Data Documentation

int antlr3.BaseRecognizer.MEMO_RULE_FAILED = 2 [static]

int antlr3.BaseRecognizer.MEMO_RULE_UNKNOWN = 1 [static]

antlr3.BaseRecognizer.HIDDEN = HIDDEN_CHANNEL [static]

antlr3.BaseRecognizer.tokenNames = None [static]

tuple antlr3.BaseRecognizer.antlr_version = (3, 0, 1, 0) [static]

string antlr3.BaseRecognizer.antlr_version_str = "3.0.1" [static]

antlr3.BaseRecognizer._state [private]

The state of a lexer, parser, or tree parser is collected into a state object so that it can be shared.

This sharing is needed to have one grammar import others and share same error variables and other state variables. It's a kind of explicit multiple inheritance via delegation of methods and shared state.
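A small sketch of the sharing, assuming a state object that holds only two fields: `SharedStateSketch` and `DelegatingRecognizer` are hypothetical stand-ins, and only the `state = None` constructor parameter and the `_state` attribute mirror names documented on this page.

```python
class SharedStateSketch:
    """Hypothetical state object holding the fields recognizers share."""

    def __init__(self):
        self.errorRecovery = False
        self.syntaxErrors = 0


class DelegatingRecognizer:
    def __init__(self, state=None):
        # Reuse the caller's state if given, otherwise create a private one.
        self._state = state if state is not None else SharedStateSketch()

    def reportError(self):
        self._state.syntaxErrors += 1


root = DelegatingRecognizer()                    # owns the state
imported = DelegatingRecognizer(root._state)     # delegate shares it
imported.reportError()
print(root._state.syntaxErrors)                  # prints 1
```

Because both objects point at the same state, an error reported by the delegate is visible through the root recognizer's counters.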

The documentation for this class was generated from the following file: antlr3.py
