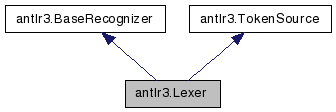

antlr3.Lexer Class Reference

Baseclass for generated lexer classes. More...

Public Member Functions | |

| def | __init__ |

| def | reset |

| reset the parser's state; subclasses must rewinds the input stream | |

| def | nextToken |

| Return a token from this source; i.e., match a token on the char stream. | |

| def | skip |

| Instruct the lexer to skip creating a token for current lexer rule and look for another token. | |

| def | mTokens |

| This is the lexer entry point that sets instance var 'token'. | |

| def | setCharStream |

| Set the char stream and reset the lexer. | |

| def | getSourceName |

| def | emit |

| The standard method called to automatically emit a token at the outermost lexical rule. | |

| def | match |

| def | matchAny |

| def | matchRange |

| def | getLine |

| def | getCharPositionInLine |

| def | getCharIndex |

| What is the index of the current character of lookahead? | |

| def | getText |

| Return the text matched so far for the current token or any text override. | |

| def | setText |

| Set the complete text of this token; it wipes any previous changes to the text. | |

| def | reportError |

| Report a recognition problem. | |

| def | getErrorMessage |

| What error message should be generated for the various exception types? | |

| def | getCharErrorDisplay |

| def | recover |

| Lexers can normally match any char in it's vocabulary after matching a token, so do the easy thing and just kill a character and hope it all works out. | |

| def | traceIn |

| def | traceOut |

Public Attributes | |

| input | |

Static Public Attributes | |

| tuple | text = property(getText, setText) |

Detailed Description

Baseclass for generated lexer classes.A lexer is recognizer that draws input symbols from a character stream. lexer grammars result in a subclass of this object. A Lexer object uses simplified match() and error recovery mechanisms in the interest of speed.

Definition at line 3506 of file antlr3.py.

Member Function Documentation

| def antlr3.Lexer.reset | ( | self | ) |

reset the parser's state; subclasses must rewinds the input stream

Reimplemented from antlr3.BaseRecognizer.

| def antlr3.Lexer.nextToken | ( | self | ) |

Return a token from this source; i.e., match a token on the char stream.

Reimplemented from antlr3.TokenSource.

| def antlr3.Lexer.skip | ( | self | ) |

Instruct the lexer to skip creating a token for current lexer rule and look for another token.

nextToken() knows to keep looking when a lexer rule finishes with token set to SKIP_TOKEN. Recall that if token==null at end of any token rule, it creates one for you and emits it.

| def antlr3.Lexer.mTokens | ( | self | ) |

| def antlr3.Lexer.setCharStream | ( | self, | ||

| input | ||||

| ) |

| def antlr3.Lexer.emit | ( | self, | ||

token = None | ||||

| ) |

The standard method called to automatically emit a token at the outermost lexical rule.

The token object should point into the char buffer start..stop. If there is a text override in 'text', use that to set the token's text. Override this method to emit custom Token objects.

If you are building trees, then you should also override Parser or TreeParser.getMissingSymbol().

| def antlr3.Lexer.getCharIndex | ( | self | ) |

| def antlr3.Lexer.getText | ( | self | ) |

| def antlr3.Lexer.setText | ( | self, | ||

| text | ||||

| ) |

| def antlr3.Lexer.reportError | ( | self, | ||

| e | ||||

| ) |

Report a recognition problem.

This method sets errorRecovery to indicate the parser is recovering not parsing. Once in recovery mode, no errors are generated. To get out of recovery mode, the parser must successfully match a token (after a resync). So it will go:

1. error occurs 2. enter recovery mode, report error 3. consume until token found in resynch set 4. try to resume parsing 5. next match() will reset errorRecovery mode

If you override, make sure to update syntaxErrors if you care about that.

Reimplemented from antlr3.BaseRecognizer.

| def antlr3.Lexer.getErrorMessage | ( | self, | ||

| e, | ||||

| tokenNames | ||||

| ) |

What error message should be generated for the various exception types?

Not very object-oriented code, but I like having all error message generation within one method rather than spread among all of the exception classes. This also makes it much easier for the exception handling because the exception classes do not have to have pointers back to this object to access utility routines and so on. Also, changing the message for an exception type would be difficult because you would have to subclassing exception, but then somehow get ANTLR to make those kinds of exception objects instead of the default. This looks weird, but trust me--it makes the most sense in terms of flexibility.

For grammar debugging, you will want to override this to add more information such as the stack frame with getRuleInvocationStack(e, this.getClass().getName()) and, for no viable alts, the decision description and state etc...

Override this to change the message generated for one or more exception types.

Reimplemented from antlr3.BaseRecognizer.

| def antlr3.Lexer.recover | ( | self, | ||

| re | ||||

| ) |

Lexers can normally match any char in it's vocabulary after matching a token, so do the easy thing and just kill a character and hope it all works out.

You can instead use the rule invocation stack to do sophisticated error recovery if you are in a fragment rule.

Member Data Documentation

tuple antlr3.Lexer.text = property(getText, setText) [static] |

The documentation for this class was generated from the following file:

1.5.5

1.5.5